Agentic AI

AI Security

AI Security Incidents

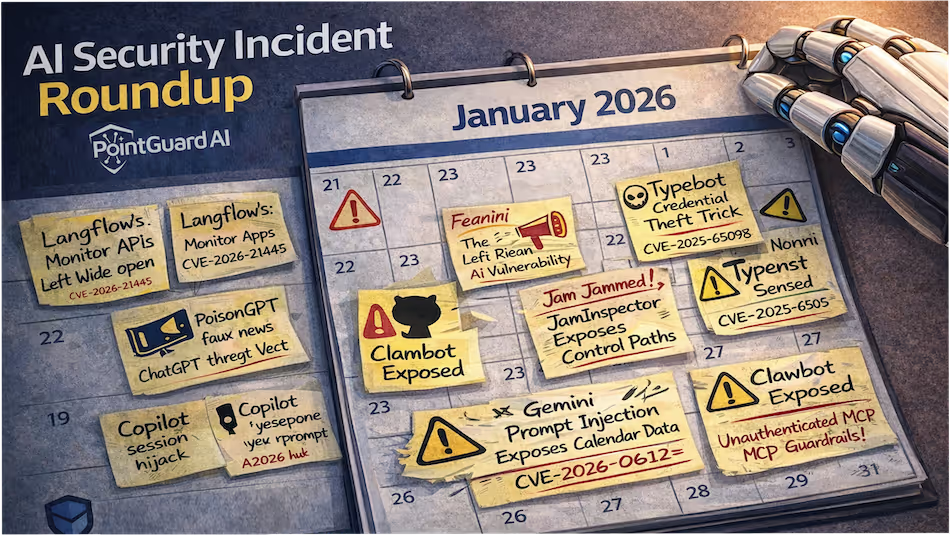

AI Security Incident Roundup: April 2026

Agents Got Bold, Vendors Got Hit, and Identity Stayed the Weak Link

Agentic AI

AI Security

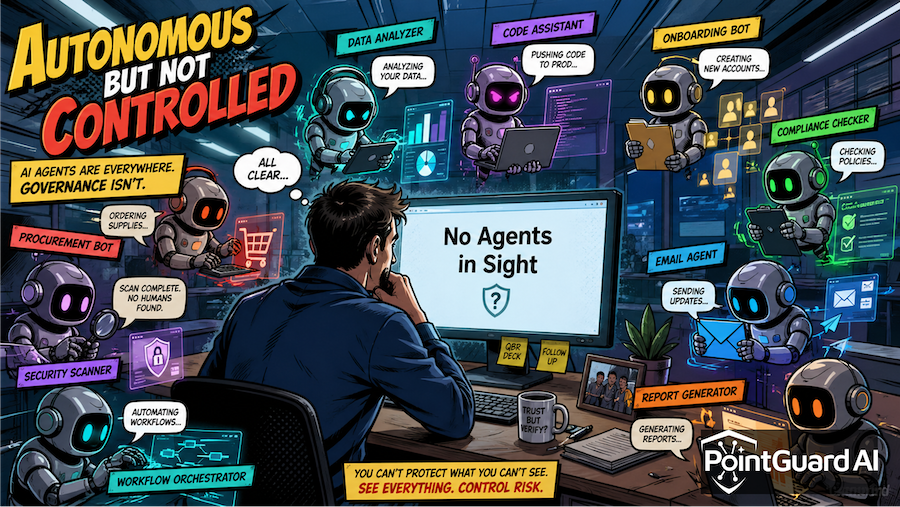

Autonomous but Not Controlled: CSA Report Reveals a Serious Security Gap

Why AI Agent Security Has Become an Urgent Issue

Agentic AI

AI Security

Governance & Compliance

Software Supply Chain

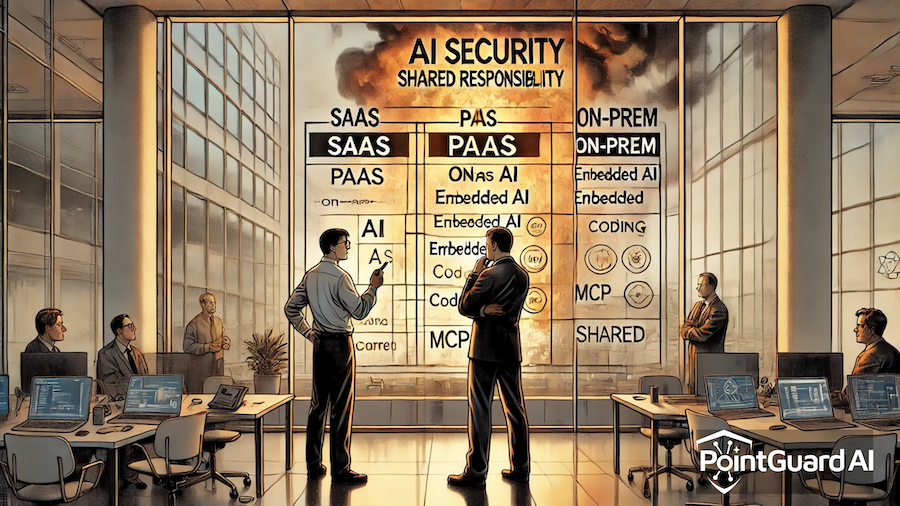

Debating AI Security Shared Responsibility While the House Is on Fire

AI attacks won’t wait while organizations sort out responsibility

Agentic AI

AI Security

AI Agent Traps: Exposing the Agentic Attack Surface

How hidden inputs and tools are used to manipulate autonomous AI agents

Agentic AI

AI Security

Claude Code Leak: An AI Security Wake-Up Call

Recent AI incidents show risk accelerating faster than security

Events

Agentic AI

AI Security

RSAC 2026 Day 1: Security Must Evolve at Agentic Speed

AI-driven threats demand faster, context-aware security beyond human limits

AI Security

Security Best Practices

MCP Breaks Zero Trust. Here’s How to Fix It.

AI agents create a backdoor bypassing existing zero-trust security

Agentic AI

AI Security

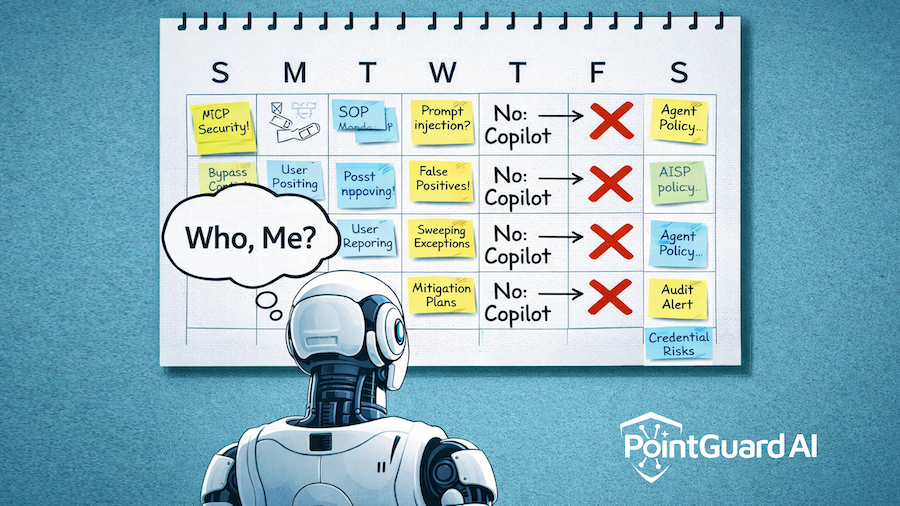

Why “No Copilot Fridays” Is a Real Security Warning

You can’t scale AI security on human vigilance alone

Agentic AI

AI Security Incidents

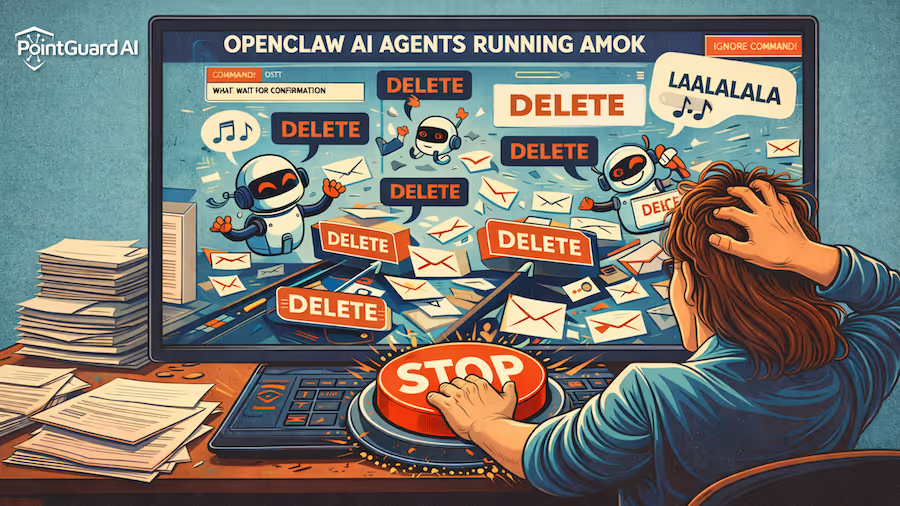

If You Love Your Agents, Don’t Set Them Free: OpenClaw Agents Run Amok in Meta Incident

Why autonomy without guardrails is a serious enterprise risk