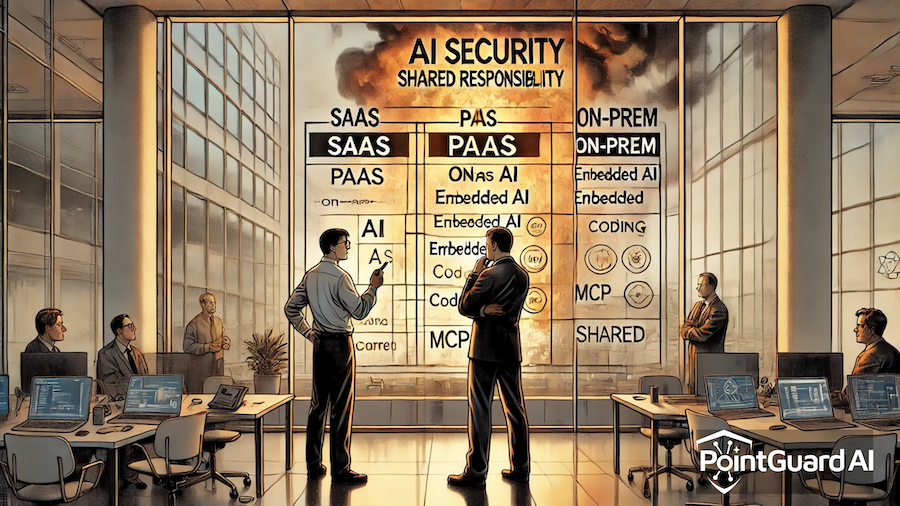

Much of the current discourse on agentic AI security focuses on protocols: how agents connect to tools, how the Model Context Protocol (MCP) works, and how to secure those integrations. But that framing misses the real issue. As one recent SC World perspective argues, MCP isn’t fundamentally a protocol problem; it’s an identity crisis that few organizations are treating as such. (SC Media)

And that distinction matters more than most teams realize.

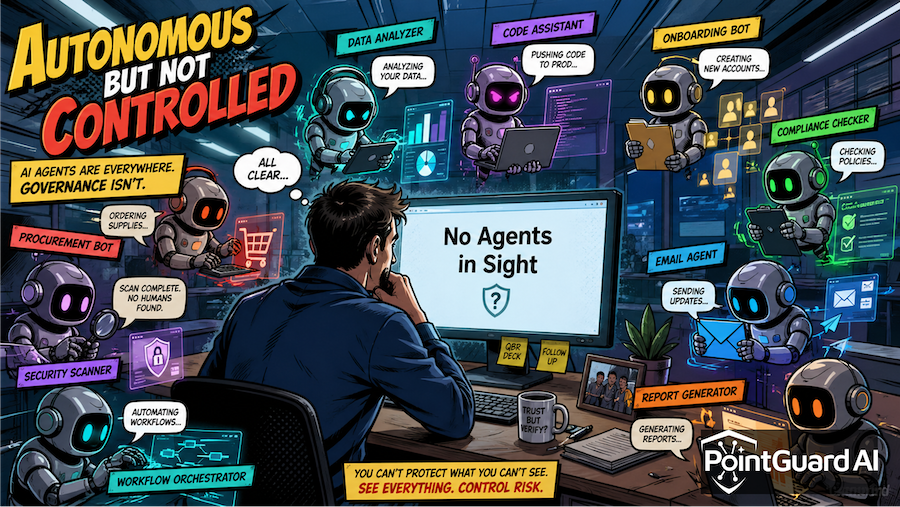

The Rise of Agentic Systems—and Invisible Identities

Agentic AI represents a fundamental shift in how software operates. These systems are not passive responders; they act, decide, and interact with enterprise infrastructure autonomously. Through MCP and similar frameworks, agents can query databases, modify records, call APIs, and orchestrate workflows across systems. (Cloud Security Alliance)

That capability is transformative. It’s also deeply destabilizing from a security standpoint.

Every agent is effectively a new identity, one that can operate at machine speed, across multiple systems, often with broad permissions. Yet most organizations still treat these agents as extensions of applications rather than as first-class identities. This creates a dangerous gap between what agents can do and what security teams can see and control.

Traditional identity and access management (IAM) was built around humans and service accounts. It assumes relatively predictable behavior, bounded activity, and clear ownership. Agentic systems break all three assumptions. They can generate thousands of actions per hour, dynamically change behavior based on context, and operate without a clearly accountable human owner. (News from generation RAG)

The result is an explosion of non-human identities (NHIs) that exist outside the visibility and control of existing security frameworks.

MCP Didn’t Create the Problem—It Exposed It

MCP has rapidly become the de facto standard for connecting agents to tools, often described as “USB-C for AI.” Its adoption has been explosive, with thousands of servers connecting agents to databases, SaaS platforms, and cloud infrastructure. (clawmoat.com) But in enabling this connectivity, MCP has also exposed a critical weakness: most of these connections are not governed by robust identity controls.

In practice, that means:

- Agents operating with excessive or unclear permissions

- Tokens and credentials that are not lifecycle-managed

- Limited visibility into which agent performed which action

- Weak or missing authentication across MCP servers

Some analyses have found that a significant percentage of MCP servers lack proper authentication altogether, creating open pathways into sensitive systems.

Even when authentication exists, it is often shallow, focused on connectivity rather than intent. An agent may be authorized to access a system without anyone evaluating whether a specific action should be allowed in that context. This is where the identity crisis becomes acute: security teams are trying to secure actions without understanding the identity behind them.

The Real Risk: Actions Without Accountability

In traditional systems, identity provides accountability. You know who performed an action, what permissions they had, and whether that action aligns with policy. In agentic systems, that chain breaks down.

An agent may:

- Act on behalf of a user

- Combine data from multiple sources

- Trigger downstream actions across systems

- Operate asynchronously or persistently

Without a strong identity layer, these actions become difficult to trace, validate, or constrain. This is not theoretical. Recent MCP-related incidents and vulnerabilities have demonstrated how easily validation can be bypassed or how agent permissions can be exploited when controls are weak.

And as agent capabilities evolve—toward persistent, always-running assistants—the risk compounds. Agents that act proactively and operate in the background require continuous identity governance, not just point-in-time authentication. (Obot AI)
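The difference between point-in-time and continuous checks can be made concrete. The sketch below is a minimal illustration, assuming short-lived agent credentials and a revocation list maintained out of band; `AgentCredential`, `REVOKED`, `still_valid`, `run_action`, and the 15-minute lifetime are hypothetical names and values, not part of MCP or any PointGuard product. The credential is re-checked before every action, so revoking it or letting it expire stops a long-running agent mid-session.

```python
import time
from dataclasses import dataclass
from typing import Callable, Optional, Set, TypeVar

T = TypeVar("T")

# Hypothetical revocation list; in practice fed by an identity provider.
REVOKED: Set[str] = set()


@dataclass
class AgentCredential:
    token_id: str
    agent_id: str
    issued_at: float          # Unix timestamp when the credential was issued
    ttl_seconds: float = 900  # hypothetical 15-minute lifetime


def still_valid(cred: AgentCredential, now: Optional[float] = None) -> bool:
    """Return True only if the credential is unrevoked and unexpired right now."""
    now = time.time() if now is None else now
    if cred.token_id in REVOKED:
        return False
    return now < cred.issued_at + cred.ttl_seconds


def run_action(cred: AgentCredential, action: Callable[[], T],
               now: Optional[float] = None) -> T:
    """Re-validate the credential before every action, not just at session start."""
    if not still_valid(cred, now):
        raise PermissionError(
            f"credential {cred.token_id} for {cred.agent_id} is expired or revoked"
        )
    return action()
```

An always-running assistant built this way cannot coast on a session that was authenticated hours earlier; each action depends on the credential's current state.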

The core issue is simple: AI systems access data and perform actions through identities. If those identities are poorly defined or unmanaged, the entire system becomes insecure.

Why Identity Must Come First

The industry’s current approach often treats identity as an add-on—something to layer on after agents are deployed. That model no longer works.

In an agentic environment, identity is the control plane. It determines:

- What an agent is allowed to do

- On whose behalf it is acting

- How its actions are audited and constrained

- Whether its behavior aligns with policy and context

Without this foundation, other controls—prompt filtering, output validation, even network security—are reactive at best. The organizations that succeed in securing agentic AI will be those that treat identity as the primary security boundary, not an afterthought.

PointGuard AI: An Identity-First Approach to Agentic Security

This is where PointGuard AI takes a fundamentally different approach. Rather than focusing solely on protocols or integrations, PointGuard AI centers security on identity—treating every agent, tool interaction, and action as part of an identity-driven system.

At the core of this model is the PointGuard MCP Security Gateway, which enforces identity-first controls across agentic environments.

Strong Agent Authentication

Every agent is treated as a verifiable identity, not an implicit extension of an application. This ensures that all interactions begin with authenticated, accountable entities.
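As an illustration of what treating an agent as a verifiable identity can mean at a gateway, here is a minimal sketch. It assumes each registered agent holds its own secret and signs a timestamped assertion with HMAC-SHA256; the registry, function names, and 60-second freshness window are hypothetical. Real deployments would more likely use mutual TLS or OAuth client credentials with signed JWTs, and this is not a description of how PointGuard implements authentication.

```python
import hashlib
import hmac
import time
from typing import Optional

# Hypothetical registry of per-agent secrets. In practice these would live
# in a secrets manager and be rotated, never hard-coded.
AGENT_REGISTRY = {
    "invoice-agent": b"example-secret-invoice",
    "support-agent": b"example-secret-support",
}

MAX_SKEW_SECONDS = 60  # reject assertions older (or newer) than this


def sign_assertion(agent_id: str, secret: bytes, issued_at: int) -> str:
    """Agent side: HMAC-SHA256 over 'agent_id|issued_at'."""
    message = f"{agent_id}|{issued_at}".encode()
    return hmac.new(secret, message, hashlib.sha256).hexdigest()


def verify_agent(agent_id: str, issued_at: int, signature: str,
                 now: Optional[int] = None) -> bool:
    """Gateway side: accept only registered agents with a fresh, valid signature."""
    secret = AGENT_REGISTRY.get(agent_id)
    if secret is None:
        return False  # unknown agent: no implicit trust
    now = int(time.time()) if now is None else now
    if abs(now - issued_at) > MAX_SKEW_SECONDS:
        return False  # stale or future-dated assertion
    expected = sign_assertion(agent_id, secret, issued_at)
    return hmac.compare_digest(expected, signature)  # constant-time comparison
```

Because the signature binds the agent ID to the timestamp, an assertion cannot be reused under another agent's name and stops working once the freshness window passes. Preventing replay within the window would need a nonce cache, which is omitted here.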

On-Behalf-Of (OBO) Tokenization

Agents frequently act on behalf of users. PointGuard enforces this relationship through OBO tokens, preserving the chain of identity and ensuring that actions can always be traced back to a human or originating system.
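OAuth 2.0 Token Exchange (RFC 8693) defines a standard way to express this kind of delegation: the `sub` claim names the user, and an `act` (actor) claim names the party acting on their behalf, with nested `act` claims recording prior actors. The sketch below assumes the token has already been signature-verified and decoded into a dictionary, and shows how a gateway could recover the full delegation chain for audit. The helper names and sample identities are hypothetical; the post does not describe PointGuard's actual token format.

```python
from typing import Any, Dict, List


def delegation_chain(claims: Dict[str, Any]) -> List[str]:
    """Return [user, earliest actor, ..., current actor] from RFC 8693-style claims.

    Per RFC 8693, the outermost "act" claim is the current actor and more
    deeply nested "act" claims are prior actors.
    """
    actors: List[str] = []
    act = claims.get("act")
    while act is not None:
        actors.append(act["sub"])  # outermost first, i.e. most recent actor first
        act = act.get("act")
    actors.reverse()               # chronological order: earliest actor first
    return [claims["sub"]] + actors


def audit_record(claims: Dict[str, Any], tool: str, action: str) -> Dict[str, Any]:
    """Build a hypothetical audit entry tying an action to the full identity chain."""
    chain = delegation_chain(claims)
    return {
        "on_behalf_of": chain[0],  # the human or originating system
        "actor": chain[-1],        # the agent that actually made the call
        "chain": " -> ".join(chain),
        "tool": tool,
        "action": action,
    }


# Example: Alice -> orchestrator agent -> billing agent (current actor)
claims = {
    "sub": "alice@example.com",
    "act": {"sub": "billing-agent", "act": {"sub": "orchestrator-agent"}},
}
print(audit_record(claims, tool="crm", action="update_record")["chain"])
# alice@example.com -> orchestrator-agent -> billing-agent
```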

Tool-Level Authorization

Instead of granting broad access, PointGuard enforces fine-grained, tool-level authorization. Agents are not just authenticated—they are evaluated for each action they attempt to perform. This aligns access with intent, not just connectivity.
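A minimal sketch of per-call, default-deny authorization follows, assuming a hypothetical policy table keyed by agent and tool, plus the delegating user's scopes (for example, carried in an OBO token). Each tool call is checked against four things: whether the agent may use the tool at all, whether the specific action is allowed, whether the user the agent acts for holds that permission, and whether the request stays within a per-call volume limit. The names, the `tool:action` scope format, and the limits are illustrative assumptions.

```python
from dataclasses import dataclass
from typing import Dict, Optional, Set, Tuple


@dataclass
class ToolRule:
    actions: Set[str]                  # actions this agent may invoke on the tool
    max_records: Optional[int] = None  # hypothetical per-call volume cap


# Hypothetical policy: agent -> tool -> rule. Anything not listed is denied.
POLICY: Dict[str, Dict[str, ToolRule]] = {
    "support-agent": {
        "crm": ToolRule(actions={"read"}, max_records=50),
        "ticketing": ToolRule(actions={"read", "comment"}),
    },
}


@dataclass
class ToolCall:
    agent_id: str
    user_scopes: Set[str]  # scopes of the user the agent acts for, e.g. {"crm:read"}
    tool: str
    action: str
    records: int = 1


def authorize(call: ToolCall) -> Tuple[bool, str]:
    """Evaluate a single tool call; default deny."""
    rule = POLICY.get(call.agent_id, {}).get(call.tool)
    if rule is None:
        return False, f"{call.agent_id} may not use {call.tool}"
    if call.action not in rule.actions:
        return False, f"action '{call.action}' is not permitted on {call.tool}"
    if f"{call.tool}:{call.action}" not in call.user_scopes:
        return False, "delegating user lacks this permission"
    if rule.max_records is not None and call.records > rule.max_records:
        return False, "request exceeds the per-call record limit"
    return True, "allowed"
```

Checking the user's scopes alongside the agent's own policy means an agent can never do more than both it and the person it represents are allowed to do.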

Runtime Guardrails and DLP

Identity alone is not sufficient without enforcement at runtime. PointGuard integrates guardrails and data loss prevention (DLP) controls to ensure that sensitive data is not exposed or misused during agent operations. These controls operate in real time, aligned with the identity context of each action.
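To show how DLP decisions can depend on identity context, here is a deliberately simple sketch that scans a tool's output before it reaches the agent and redacts matches unless the caller's scopes include a hypothetical `pii:read` grant. The two regex detectors (a US Social Security number pattern and a basic email pattern) are illustrative only; production DLP relies on much richer detection and classification, and this is not PointGuard's implementation.

```python
import re
from typing import Dict, List, Set, Tuple

# Illustrative detectors only; real DLP uses far richer detection.
DETECTORS: Dict[str, re.Pattern] = {
    "ssn": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "email": re.compile(r"\b[\w.+-]+@[\w-]+\.[\w.-]+\b"),
}


def apply_dlp(text: str, scopes: Set[str]) -> Tuple[str, List[str]]:
    """Redact sensitive values unless the identity context grants 'pii:read'.

    Returns the (possibly redacted) text and the list of detector labels
    that matched, so the gateway can log findings either way.
    """
    findings: List[str] = []
    for label, pattern in DETECTORS.items():
        if pattern.search(text):
            findings.append(label)
            if "pii:read" not in scopes:
                text = pattern.sub(f"[REDACTED:{label}]", text)
    return text, findings
```

Returning the findings alongside the text lets the same call serve both enforcement (redaction for under-privileged identities) and audit (a record of what sensitive data flowed, even to authorized callers).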

Continuous Discovery of AI Assets

One of the biggest challenges in agentic environments is visibility. New agents, tools, and integrations are constantly being introduced.

PointGuard continuously discovers and inventories AI assets, ensuring that all identities—human and non-human—are accounted for and governed.
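At its core, discovery is a reconciliation problem: compare what is actually running against what is registered for governance. The sketch below assumes some discovery process (scanning MCP client configurations or network traffic, for example) already yields a list of assets; `AIAsset`, `reconcile`, and the three categories it reports are hypothetical names chosen for illustration.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional


@dataclass(frozen=True)
class AIAsset:
    asset_id: str
    kind: str                    # e.g. "agent", "mcp_server", "tool"
    owner: Optional[str] = None  # accountable human or team, if known


def reconcile(discovered: List[AIAsset],
              inventory: Dict[str, AIAsset]) -> Dict[str, List[str]]:
    """Compare observed assets with the governed inventory."""
    seen = {a.asset_id for a in discovered}
    return {
        # running in the environment but never registered for governance
        "unmanaged": sorted(a.asset_id for a in discovered if a.asset_id not in inventory),
        # registered but lacking an accountable owner
        "ownerless": sorted(i for i, a in inventory.items() if a.owner is None),
        # registered but no longer observed: candidates for credential revocation
        "stale": sorted(i for i in inventory if i not in seen),
    }
```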

From Identity Crisis to Identity Control

The agentic revolution is already underway. MCP and similar frameworks have solved the problem of connectivity. But connectivity without identity is not progress—it’s exposure.

The real challenge facing organizations today is not how to connect agents to systems. It’s how to govern the identities that those agents represent. Until identity becomes the foundation of agentic security, the gap will continue to widen.

PointGuard AI’s identity-first approach closes that gap—transforming agentic AI from an unmanaged risk into a controlled, auditable, and secure capability.

Because in the end, every agent action is an identity decision. And that’s where security has to start.